Imagine typing a short sentence into the prompt box of an AI tool and, within a few seconds, seeing a complete cinematic scene in full color on your screen. Not the distorted animation of previous years, where characters had six fingers and the background melted. We are now looking at systems that behave like virtual directors. It is a dramatic change for anyone involved in digital content.

Seedance AI by ByteDance is one of the many tools that recently launched into the market. Unlike many of them, it does not focus only on automating motion. It is designed to support real cinematic storytelling, including native audio and multi-shot continuity.

Now, let's lift the lid and see how this cinematic AI model actually functions, where it came from, the huge controversy it caused a few months back, and how you can make it work in a creative workflow.

The Shift towards Cinematic AI

Over the last several years, generative AI has rapidly expanded beyond text generation to image generation and now to video generation. Early systems could only create basic animations or brief clips, but current systems are quickly approaching cinema quality.

AI video generation technology is seeing heavy investment from companies worldwide. Some of the most popular models in this space include:

| Model | Description |
| --- | --- |
| Sora | A model that can produce realistic videos from text prompts. |
| Veo | A model intended for longer videos. |
| Runway Gen Models | A family of models used by many creators and editors. |
| Pika | A model that specializes in creative animation and stylized video. |
| Seedance | A cinema-oriented, multi-shot story model. |

These models reflect a wider shift in creative technology. Current tools try to fold the customary functions of a director, camera operator, sound designer, and animator into a single interface. The idea is to interpret your artistic instructions and create full visual stories that look like they belong in a theater. Seedance belongs to this new generation of tools that bring film-style creativity to AI video.

What Is Seedance AI?

Seedance is a generative foundation model created by ByteDance. It grew out of two of the company's earlier development branches, PixelDance and Seaweed. By combining their capabilities, ByteDance created a powerful model that can transform text, pictures, or other references into richly detailed video clips.

After you feed in a prompt, the system examines your input to determine the setting, the objects, and the desired camera movement. Then, it builds the images step-by-step with the help of advanced neural networks. The general process of creating a video using Seedance usually consists of the following steps:

- The user enters a prompt in natural language describing their scene.

- The AI analyzes the description to recognize visual elements.

- A generative model creates single frames that describe the scene.

- The frames are stitched together to create a video sequence.

Since the system creates frames progressively, it has the ability to mimic movement, lighting changes, and camera motion.
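To make the four steps above concrete, here is a toy sketch of the same flow in Python. It is not Seedance's implementation: `analyze_prompt`, `generate_frame`, and `stitch` are hypothetical stand-ins, and the "frames" are just labelled placeholders rather than images. It only shows how a prompt, a per-frame generator, and a final assembly step fit together.

```python
from dataclasses import dataclass


@dataclass
class SceneSpec:
    """Hypothetical parsed form of a prompt; not a Seedance data type."""
    prompt: str
    elements: list[str]


def analyze_prompt(prompt: str) -> SceneSpec:
    # Stand-in for prompt analysis: treat each comma-separated phrase
    # as one visual element of the scene.
    elements = [part.strip() for part in prompt.split(",") if part.strip()]
    return SceneSpec(prompt=prompt, elements=elements)


def generate_frame(spec: SceneSpec, index: int) -> dict:
    # Stand-in for the generative model: returns a placeholder frame
    # that records its position and the elements it should depict.
    return {"index": index, "elements": list(spec.elements)}


def stitch(frames: list[dict], fps: int) -> dict:
    # Stand-in for assembling the frames into a video sequence.
    return {"fps": fps, "frames": frames, "duration_s": len(frames) / fps}


def make_video(prompt: str, seconds: int = 2, fps: int = 24) -> dict:
    spec = analyze_prompt(prompt)
    frames = [generate_frame(spec, i) for i in range(seconds * fps)]
    return stitch(frames, fps)


video = make_video("city skyline at sunset, flying cars, slow aerial shot")
print(len(video["frames"]), video["duration_s"])  # 48 2.0
```

The default of 2 seconds at 24 frames per second is an arbitrary choice for the example, not a Seedance setting.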

Seedance Model Versions

Seedance has gone through multiple iterations, like other AI systems, as researchers continually refine its structure and training procedures.

Seedance v1.0

The first version of Seedance focused on basic video creation. Although these early builds were impressive for experimental videos, they still struggled with frame consistency and complicated motion sequences. The most important characteristics were:

- Text-to-video generation

- Short cinematic clips

- Multi-shot scene creation

- Increased real-time interpretation

Seedance v1.5

Later releases improved video realism and motion continuity. Some versions also experimented with audio-visual synchronization, allowing sound and motion to align more closely. These updates focused on:

- Smoother object movement

- Stabilized scene composition

- Better interpretation of prompts

Seedance v2.0

The latest versions focus on multimodal video generation, meaning the system can understand several types of input simultaneously. By combining different media, the model can produce videos that better fit the style and narrative structure a creator wants. Possible inputs include:

- Text prompts

- Reference images

- Video clips

- Audio inputs

Core Features of Seedance AI

Seedance offers a number of features that support creative work and cinematic storytelling.

Text-to-Video Generation

The most frequent method for making videos through AI is via text prompts. A user describes a scene using natural language, and the system creates a visual representation.

Example prompt: A city skyline of the future at sunset, flying cars soaring between towering lights, cinematic lighting, slow-moving aerial camera shots.

Based on this description, the AI creates a video capturing the surroundings and movement. Text-to-video generation enables creators to rapidly explore several ideas without needing classic movie equipment.

Image Animation

Seedance can also animate still images. The user uploads a picture or illustration, and the AI introduces movement into the scene. This feature is frequently used for:

- Animating concept art

- Bringing characters to life

- Introducing movement to digital paintings

- Generating short visual sequences based on stationary images

These image-to-video workflows are especially handy for designers who already possess visual assets.

Multi-Shot Video Generation

Early AI models produced single clips that could not be structured into scenes. Seedance tries to overcome this shortcoming by creating multi-shot sequences. This method enables the AI to produce videos that look like mini-movies rather than isolated clips. For example, a single prompt could generate:

- An establishing shot of the scenery.

- A closer shot of a character.

- A traveling camera shot through the scenery.
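One way to think about a multi-shot sequence is as an ordered shot list. The sketch below is purely illustrative: the `Shot` class, the `build_shot_list` helper, and the durations are assumptions made for this example and do not describe how Seedance represents shots internally.

```python
from dataclasses import dataclass


@dataclass
class Shot:
    """One entry in a hypothetical shot list."""
    kind: str
    description: str
    seconds: float  # illustrative duration, not a Seedance default


def build_shot_list(subject: str, setting: str) -> list[Shot]:
    # Mirrors the three example shots: establishing, closer, traveling.
    return [
        Shot("establishing", f"Wide view of {setting}", 3.0),
        Shot("close-up", f"Closer shot of {subject}", 2.0),
        Shot("traveling", f"Camera moving through {setting}", 4.0),
    ]


shots = build_shot_list("a lone hiker", "a misty pine forest")
total_seconds = sum(shot.seconds for shot in shots)

for number, shot in enumerate(shots, start=1):
    print(f"Shot {number} ({shot.kind}): {shot.description}")
print(total_seconds)  # 9.0
```

Writing the sequence out this way makes it easy to check that the subject and setting stay the same from shot to shot before any video is generated.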

Scene Consistency and Character Consistency

One of the most difficult issues in generative video is maintaining consistency between frames. Keeping the scene stable from one frame to the next helps produce videos that feel more natural and less disjointed. Seedance works to preserve:

- Character identity

- Lighting conditions

- Environmental structure

- Camera perspective
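To give a feel for what "consistency between frames" means in practice, here is a crude check a creator could run on a generated clip. It is an assumption-laden sketch, not how Seedance enforces consistency: frames are represented as short lists of grayscale values, and the `threshold` of 20 is an arbitrary number chosen for the example.

```python
def mean_abs_diff(frame_a: list[int], frame_b: list[int]) -> float:
    # Average per-pixel brightness change between two frames.
    return sum(abs(a - b) for a, b in zip(frame_a, frame_b)) / len(frame_a)


def find_jumps(frames: list[list[int]], threshold: float = 20.0) -> list[int]:
    # Return the indices of frames that differ sharply from the frame before.
    return [
        i
        for i in range(1, len(frames))
        if mean_abs_diff(frames[i - 1], frames[i]) > threshold
    ]


frames = [
    [100, 100, 100, 100],
    [102, 101, 99, 100],  # small change: normal motion
    [180, 20, 175, 30],   # large change: possible flicker or morphing
    [181, 22, 174, 31],   # small change again
]
print(find_jumps(frames))  # [2]
```

A real check would also need to tell intentional cuts between shots apart from unwanted morphing, which a simple pixel difference cannot do.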

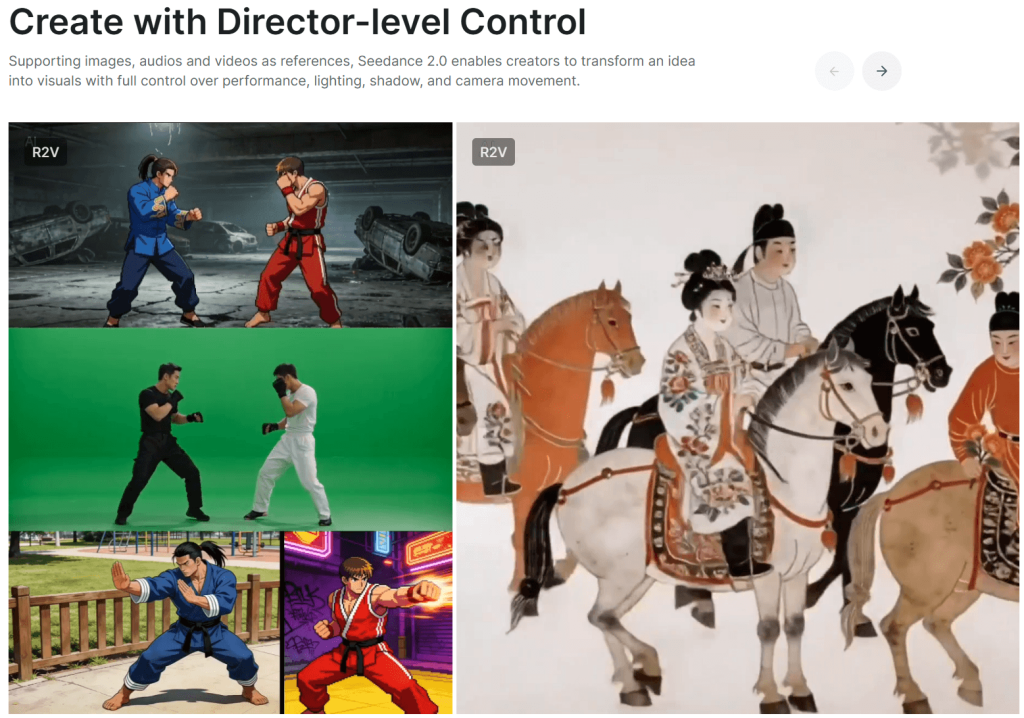

Creative Control at the Director Level

The ability to simulate filmmaking techniques is one of the most fascinating components of modern AI video tools. Instructions in Seedance can control:

- Camera movement

- Lighting style

- Lens effects

- Scene composition

For example, prompts can specify:

- Camera movement: A slow-paced drone shot flying over a mountain valley at sunrise.

- Lighting: Dramatic lighting with soft golden shadows.

- Motion: A figure walking slowly through falling snow.

Defining these specific features allows creators to direct the AI to generate scenes resembling professional filmmaking. This level of control is why many individuals now refer to AI video tools as virtual filmmakers instead of mere animation engines.
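A simple way to keep these directions organized is to assemble the prompt from named parts. The helper below is a hypothetical sketch: `build_director_prompt` and its parameters are invented for illustration and are not part of any Seedance interface. It simply joins a subject with whichever direction notes are supplied.

```python
from typing import Optional


def build_director_prompt(
    subject: str,
    camera: Optional[str] = None,
    lighting: Optional[str] = None,
    lens: Optional[str] = None,
    composition: Optional[str] = None,
) -> str:
    """Join a subject with optional direction notes into one prompt string."""
    parts = [subject]
    for note in (camera, lighting, lens, composition):
        if note:  # skip any direction the creator left unspecified
            parts.append(note)
    return ", ".join(parts)


prompt = build_director_prompt(
    subject="A figure walking slowly through falling snow",
    camera="slow-paced drone shot",
    lighting="dramatic lighting with soft golden shadows",
)
print(prompt)
```

Keeping camera, lighting, lens, and composition as separate arguments makes it easy to change one element at a time and compare the results.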

Multimodal Inputs

Multimodal learning has become one of the most significant advances in current generative AI. Multimodal models can handle several forms of input at once, such as text and visuals. Seedance integrates this technique, allowing creators to combine multiple references during video generation and steer the result with far greater specificity. For example:

- A text prompt describes the story.

- An image defines the visual style.

- A video clip directs the movement patterns.
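The division of roles above can be pictured as a single request that bundles several references. The `MultimodalRequest` class below is a hypothetical shape made up for this example, with invented field and file names; it is not Seedance's actual input format.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class MultimodalRequest:
    """Hypothetical bundle of references; field names are illustrative."""
    story_text: str
    style_image: Optional[str] = None  # path to an image that sets the look
    motion_clip: Optional[str] = None  # path to a clip that guides movement

    def describe(self) -> list[str]:
        # List which input plays which role in the request.
        roles = [f"text -> story: {self.story_text}"]
        if self.style_image:
            roles.append(f"image -> visual style: {self.style_image}")
        if self.motion_clip:
            roles.append(f"video -> movement: {self.motion_clip}")
        return roles


request = MultimodalRequest(
    story_text="A lighthouse keeper climbs the stairs during a storm",
    style_image="oil_painting_reference.png",
    motion_clip="handheld_walk.mp4",
)
for line in request.describe():
    print(line)
```

Only the text is required in this sketch; the image and video references are optional, so the same structure covers plain text-to-video requests as well.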

Use Cases

The applications for AI video generation tools are already quite diverse, extending into various creative and professional domains.

- Creators can produce short videos for social media, narrative pieces, or experimental projects. AI lets them test ideas at low cost without expensive production systems.

- Brands can use AI video makers to create concept videos, promotional imagery, or product demonstrations. Although AI output may not be used for entire finished videos, it can be used to prototype ideas quickly.

- Filmmakers can use AI tools to plan scenes before shooting. This lets directors experiment with camera angles, lighting, and the design of the environment.

- Game designers can create cinematic sequences or concept trailers that help visualize a game world before committing to production.

- Artists are increasingly adopting AI as a legitimate artistic medium. AI video making gives artists the opportunity to explore abstract visual narratives and generative storytelling.

Seedance vs Other Competitors

Several AI video models are competing to shape the future of generative media, each with its own strengths. Seedance appears particularly focused on cinematic composition and prompt-based direction, making it attractive for storytelling and creative experimentation. The table compares their notable strengths:

| Model | Notable Strength |
| --- | --- |
| Seedance | Cinematic storytelling and scene control |
| Sora | Highly realistic video generation |
| Veo | Longer and more detailed sequences |
| Runway | Integrated editing workflows |

Limitations of Seedance AI

Although its progress is impressive, Seedance, like other AI video models, still faces a number of technical problems. These constraints are common to most AI video systems, though they are gradually being mitigated as the models improve. Common limitations include:

- Motion artifacts: Objects may move in unrealistic ways or shift randomly.

- Scene consistency issues: Complicated scenes can slightly alter or morph between frames.

- Video length restrictions: Most AI-generated clips are still relatively short.

- High computational requirements: Generating high-quality videos demands massive processing power.
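Some back-of-the-envelope arithmetic shows why clip length and processing cost are linked. The sketch below only counts the raw color values in the finished clip; it says nothing about the far larger amount of computation a model performs to produce them. The resolution, frame rate, and durations are illustrative assumptions, not Seedance specifications.

```python
def raw_values(width: int, height: int, fps: int, seconds: int, channels: int = 3) -> int:
    # Number of individual color values in an uncompressed clip.
    return width * height * channels * fps * seconds


short_clip = raw_values(1920, 1080, fps=24, seconds=5)
long_clip = raw_values(1920, 1080, fps=24, seconds=60)

print(short_clip)               # 746496000
print(long_clip // short_clip)  # 12
```

Even a five-second clip at this resolution contains roughly three quarters of a billion values, and the count grows in direct proportion to duration, which is one reason most generated clips remain short.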

Ethical and Copyright Considerations

Scientists and technology professionals strongly stress the need to deploy generative AI systems responsibly. Creators using AI video tools must take care not to misuse them and must make sure their content does not harm audiences or violate laws and ethical principles.

As AI video technology advances, it raises many ethical and legal issues, including:

- Copyright questions around the training data.

- The potential to create content that is misleading or deceptive.

- The unauthorized use or impersonation of real people.

- Inadequate disclosure of AI-generated media.

The Future of AI Video Creation

AI video generation is still in its early stages, yet the pace of innovation suggests major changes ahead. Rather than replacing traditional filmmaking, these technologies can serve as creative aids that let creators explore ideas much faster. Potential developments include:

- Longer and more elaborate video generation.

- Real-time AI video creation.

- Improved character consistency.

- Interactive storytelling systems.

- AI-assisted filmmaking tools.

Seedance AI represents a new class of video generation models meant to bring cinematic creativity to AI-generated content. By combining prompt-based storytelling with multimodal inputs, the model illustrates how generative AI is moving from simple visual experiments toward systems that can generate cohesive narrative sequences.

Despite the technology's current limitations, creative workflows will keep evolving rapidly over the next several years. For designers and filmmakers, tools such as Seedance offer a chance to experiment with a whole new way of visual storytelling.