I have looked at a lot of AI video tools over the past year, and most of them still leave me with the same reaction: impressive output, frustrating workflow.

You type in a prompt, get a flashy clip, and for a moment it feels like the future. Then you try to actually use it. One object in the frame looks wrong. A character changes between shots. The motion breaks when you extend the scene. The dialogue feels flat. The camera move looks cinematic, but it does not really add anything to the moment. Suddenly, what seemed powerful starts to feel brittle.

That is why Wan2.7-Video stands out to me.

What makes it interesting is not simply that it can generate video. At this point, a lot of models can do that. What makes Wan2.7-Video feel different is that it seems to understand a more practical truth: creators do not just need generation. They need control. They need revision. They need continuity. They need a way to shape a scene after the first output instead of constantly starting from scratch.

From that angle, Wan2.7-Video feels less like a pure text-to-video toy and more like a serious attempt at building an actual AI video creation system.

My first impression: this is trying to solve the right problem

The biggest issue with most AI video tools is not that they fail to make pretty clips. The bigger issue is that they are hard to work with once you care about details.

That is where Wan2.7-Video immediately feels more relevant. Based on how it is positioned, the model is not only about generating clips from prompts. It also leans heavily into editing, continuation, reference control, and stronger story-driven direction.

To me, that is the right direction for the category.

The future of AI video is probably not going to be won by whichever model produces the prettiest five-second sample. It will be won by whichever model helps creators make better decisions faster, keep what is already working, and fix what is not.

Wan2.7-Video seems to be built around that idea.

It feels more like a creation tool than a generation engine

This is probably the biggest reason I find it interesting.

Most AI video tools still feel like generation engines. You prompt, reroll, prompt again, reroll again, and hope one version gets close enough. That workflow is fine for experiments, but not great for serious creative work.

Wan2.7-Video appears to approach things differently. Instead of treating video as a final output, it seems to treat video more like something you can keep shaping. That may sound like a small distinction, but in practice it changes the entire experience.

In real production, the first draft is rarely the final one. Sometimes the scene is mostly right, but one detail needs to go. Sometimes the motion works, but the shot should be tighter. Sometimes the performance is close, but the emotional delivery needs more edge or softness. A useful tool should help refine those things instead of making you rebuild the whole clip every time.

That is the impression Wan2.7-Video gives off: less “generate and pray,” more “generate, adjust, and direct.”

The editing angle is where it starts to feel genuinely practical

One of the strongest things here is the emphasis on editing.

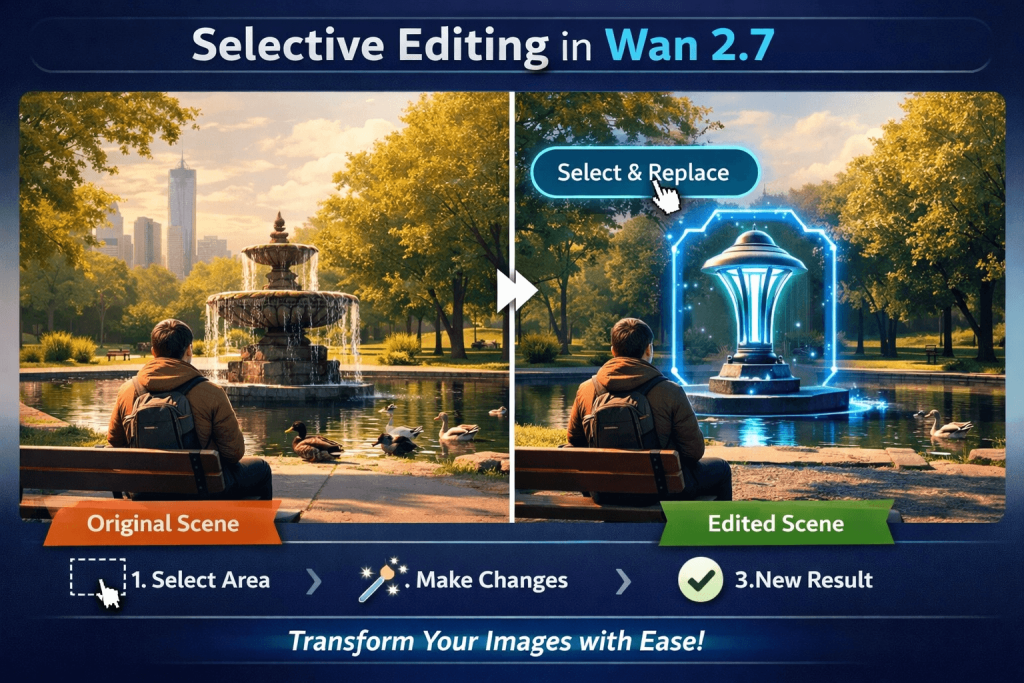

From what I saw in the material, Wan2.7-Video is designed to let users remove, replace, add, or modify local parts of a scene rather than forcing a full regeneration every time. Honestly, that alone is one of the most promising things about it.

Because that is what real creators want.

If a scene already has the right composition, energy, and timing, nobody wants to throw it away just because one object looks off. You want to keep the shot and fix the issue. Maybe remove a background element. Maybe swap the object in someone’s hand. Maybe change the season, adjust the style, or tweak the focus. Those are the kinds of changes that happen all the time in actual production work.

And in my opinion, tools that support that kind of selective editing will always feel more valuable than tools that only encourage endless rerolls.

Character consistency still matters more than flashy demos

If you use AI video often enough, you learn pretty quickly that consistency is where the real challenge begins.

It is easy to make a single good-looking shot. It is much harder to make a character feel stable across multiple shots, especially once dialogue, expression, and motion are involved. That is where many tools still break down. Faces drift. Outfits change. Voices lose identity. The “same character” starts to feel like several near-matches stitched together.

Wan2.7-Video seems to be aiming directly at that issue. It appears to support multiple reference types for characters, including image, video, and audio, with the goal of keeping both appearance and voice more consistent across scenes.

That may not sound glamorous compared to big demo clips, but it is one of the most important things a model can get right.

Because once character consistency improves, a lot of other things become possible. Short-form storytelling gets easier. Brand characters become more usable. Dialogue scenes feel less fragile. Sequences start to hold together emotionally instead of feeling like isolated visual tricks.

I also like that it seems to care about performance, not just visuals

One thing I have noticed with many AI video tools is that they can look good and still feel lifeless.

The lighting is fine. The motion is smooth enough. The scene is technically coherent. But the performance is empty. The expression does not land. The line delivery feels generic. The moment has no emotional weight.

That is why Wan2.7-Video’s focus on actions, dialogue, emotion, and facial expression caught my attention. It suggests the model is not only trying to improve what a scene looks like, but also how it plays.

To me, that is a much bigger deal than another incremental jump in surface realism.

Good video is not just visual. It is emotional. A shot succeeds when the viewer feels the scene, not just when the pixels look polished. If Wan2.7-Video is genuinely stronger at matching dialogue, expression, timing, and scene mood, then it could end up being much more useful than tools that only optimize for glossy output.

The camera control side feels more film-aware than usual

A lot of AI video platforms talk about “cinematic camera movement,” but often that just means they can add motion that looks dramatic on a product page.

What I find more interesting here is that Wan2.7-Video seems to frame camera movement as part of storytelling.

That is the right way to think about it. A push-in should create pressure or intimacy. A reveal shot should change what the viewer understands. A handheld follow should make the moment feel unstable or urgent. Camera movement is not just decoration. It is narrative language.

That is why the mention of more varied and even composite camera techniques stands out. It suggests the model is being developed with some understanding of why a shot moves, not just how.

And honestly, that is one of the differences between video that feels vaguely cinematic and video that feels intentionally directed.

Multimodal input makes this feel closer to how people actually create

Another thing I appreciate is that Wan2.7-Video does not seem locked into text prompts alone.

It appears to work with text, images, video, and audio, and it can also use storyboard-style references to shape a sequence. For me, that is a huge plus, because creators rarely think in one format only.

Sometimes the starting point is a still image. Sometimes it is a reference clip. Sometimes it is a voice sample. Sometimes it is a rough storyboard that captures the pacing better than any prompt could. The more ways a model gives you to communicate intent, the easier it becomes to get something usable.

That is why multimodal input is not just a checklist feature. It is part of what makes a tool feel practical. It reduces the gap between what is in your head and what the model can actually understand.

Scene continuation may be one of the most underrated strengths here

I do not think continuation gets enough attention in AI video, but it should.

A lot of models can make a good short clip. Far fewer can continue that clip without losing the original motion logic, mood, or visual identity. Once you try to extend a scene, everything can start to feel slightly off. The timing changes. The framing drifts. The energy no longer matches.

Wan2.7-Video appears to focus quite a bit on continuation, including better control over how a scene carries forward from existing frames. That is important because continuity is what turns separate generations into an actual video sequence.

If you care about trailers, shorts, ads, or any kind of multi-shot storytelling, this matters a lot more than another isolated demo clip that looks good for six seconds.

What really sets it apart for me is the focus on drama

This might be the most interesting part of Wan2.7-Video.

A lot of AI video products are still obsessed with the obvious metrics: realism, smooth motion, higher detail, more styles. Wan2.7-Video seems to be aiming at something deeper. It appears to be built around the idea that video should be driven by dramatic logic, not just visual polish.

That is a much more mature way to think about the medium.

A scene is not good just because it looks expensive. It works because the lighting, performance, rhythm, framing, and movement all support the emotional core of the moment. If a model understands that, even imperfect outputs can feel more alive. If it does not, even visually beautiful results can still feel hollow.

That is why I find Wan2.7-Video more compelling than the average AI video release. It seems to be trying to understand not just what a scene looks like, but what a scene is doing.

Who I think this is best for

If I had to sum it up simply, I would say Wan2.7-Video looks best suited for creators who care about control more than novelty.

That includes solo creators producing AI videos regularly, marketers testing multiple creative directions, teams working from mixed references, and storytellers who want scenes to connect instead of floating around as disconnected clips.

If all you want is a one-off visual experiment, there are already plenty of tools for that. But if you want to build something with continuity, character stability, editable structure, and stronger shot logic, Wan2.7-Video feels like a more serious option.

My final take

Wan2.7-Video stands out to me because it appears to understand a problem that a lot of AI video tools still avoid.

The problem is not just generation quality. The problem is workflow quality.

Creators do not only need a model that can make a cool clip. They need one that lets them revise, continue, control, and direct that clip without tearing everything down every time something feels wrong.

That is why this model feels promising. Its combination of editing, continuation, multimodal references, character control, performance focus, and stronger dramatic framing points toward something more useful than a standard “prompt in, clip out” system.

Of course, the real test is always hands-on reliability. That is true for every model in this category. But in terms of direction, Wan2.7-Video feels like it is aiming at the right target.

And right now, that may be more important than another flashy demo.

FAQ

What is Wan2.7-Video?

Wan2.7-Video is an AI video model that appears to go beyond standard text-to-video generation by adding editing, continuation, multimodal input, and stronger storytelling-oriented control.

What makes Wan2.7-Video different from other AI video tools?

Its main difference is that it seems designed more like a creation workflow tool than a one-shot generator. The focus on revision, continuity, character consistency, and dramatic control makes it feel more practical.

Can Wan2.7-Video edit existing videos?

Based on the source material, yes. It appears to support local scene editing such as removing, replacing, or modifying specific parts of a video without always requiring a full regeneration.

Does Wan2.7-Video support character consistency?

It appears to support image, video, and audio references for multiple characters, aiming to keep both appearance and voice more stable across scenes.

Is Wan2.7-Video good for storytelling?

It looks especially promising for storytelling because it emphasizes continuity, performance, shot language, and dramatic structure rather than only visual generation quality.

Who should use Wan2.7-Video?

It seems best suited for AI video creators, marketers, creative teams, and storytellers who want more control over editing, continuation, character stability, and scene direction.

Final takeaway: If you are looking for an AI video model that feels closer to a real creative tool than a flashy demo engine, Wan2.7-Video is one of the more interesting ones to watch.